MIE444 Maze Rover

University of Toronto | MIE444 Mechatronics | Fall 2025

Designed and implemented a complete autonomous control system for a rover that navigates a 4×8 gridded maze, locates a wooden block, and delivers it to a target location—all running onboard a Raspberry Pi 4 in Rust.

My Role: 100% of software architecture, algorithms, and implementation

Tech Stack: Rust, Tokio async runtime, RPLIDAR A1M8, MPU6050 IMU, React + Konva

Video: Simulation and maze trials

Results

Milestone 2 (Nov 10): Autonomous maze navigation from random positions—zero collisions, zero failures

Milestone 3 (Nov 18): Full block pickup and delivery mission completed (manual control, full marks)

Performance:

- Localization: 55 ms grid alignment + 1 ms cell ID per scan

- Sensor fusion: 200 Hz IMU + 5.5 Hz LIDAR

- Navigation planning: <1 ms (A* on 32-cell grid)

- Box detection: 2.5 ms, ~4 mm accuracy

Technical Approach

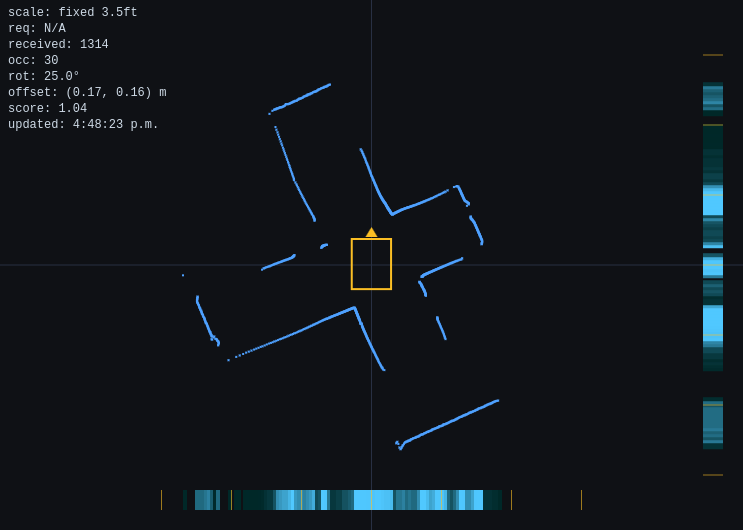

Two-Part Localization

Challenge: Determine the rover's position in the 32-cell maze using a single LIDAR sensor

Part 1: Grid Alignment (sub-cell positioning)

A single-bin Fourier transform detects the 1 ft grid periodicity in the projected LIDAR points: the magnitude indicates alignment quality, and the phase encodes the sub-cell offset.
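A minimal sketch of the single-bin idea follows. It assumes points are already in metres in the robot frame and that heading is found by brute-force search over [0°, 90°). The search strategy, step count, and the names `grid_phase` and `align` are illustrative, not the project's code. For each candidate heading the points are rotated, projected onto both axes, and evaluated at the one DFT bin whose period is 1 ft. The bin's magnitude serves as the alignment score, and its phase gives the sub-cell offset.

```rust
use std::f64::consts::PI;

/// Grid period: 1 ft in metres.
const PERIOD: f64 = 0.3048;

/// Single-bin DFT of 1-D coordinates at spatial frequency 1/period.
/// Returns (alignment in [0, 1], offset in [0, period)).
fn grid_phase(coords: &[f64], period: f64) -> (f64, f64) {
    if coords.is_empty() {
        return (0.0, 0.0);
    }
    let (mut re, mut im) = (0.0, 0.0);
    for &x in coords {
        let angle = 2.0 * PI * x / period;
        re += angle.cos();
        im -= angle.sin();
    }
    let n = coords.len() as f64;
    let alignment = (re * re + im * im).sqrt() / n;
    // Points at x = offset + k * period all share the angle 2*PI*offset/period
    // (mod 2*PI), so the bin's phase is -2*PI*offset/period.
    let offset = (-im.atan2(re) * period / (2.0 * PI)).rem_euclid(period);
    (alignment, offset)
}

/// Try `steps` headings in [0, PI/2); keep the one whose rotated points line up
/// best with the grid on both axes. Returns (heading, x offset, y offset).
fn align(points: &[(f64, f64)], period: f64, steps: usize) -> (f64, f64, f64) {
    let mut best = (f64::NEG_INFINITY, 0.0, 0.0, 0.0); // (score, theta, ox, oy)
    for i in 0..steps {
        let theta = i as f64 * (PI / 2.0) / steps as f64;
        let (s, c) = theta.sin_cos();
        let xs: Vec<f64> = points.iter().map(|&(x, y)| x * c - y * s).collect();
        let ys: Vec<f64> = points.iter().map(|&(x, y)| x * s + y * c).collect();
        let (mx, ox) = grid_phase(&xs, period);
        let (my, oy) = grid_phase(&ys, period);
        if mx + my > best.0 {
            best = (mx + my, theta, ox, oy);
        }
    }
    (best.1, best.2, best.3)
}
```

This only resolves heading modulo 90°. Choosing among the four possible rotations is left to the kernel-matching step.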

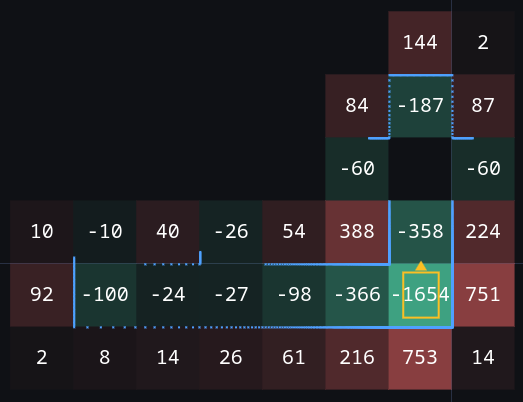

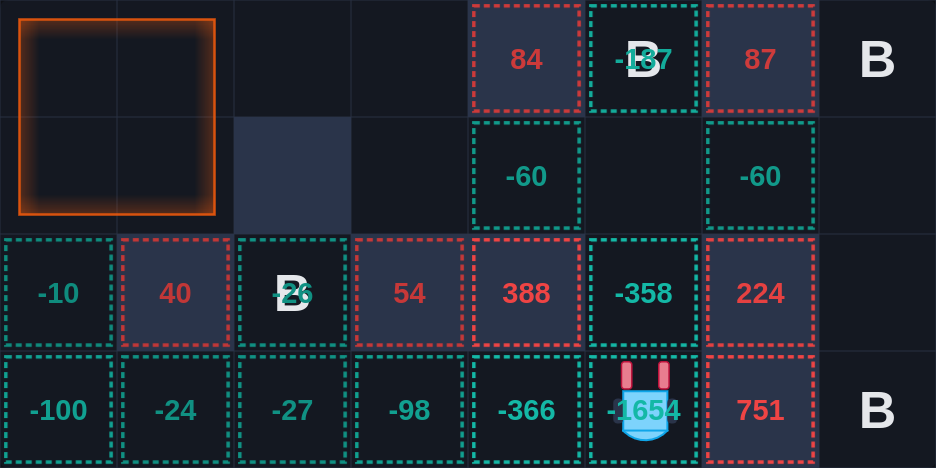

Part 2: Kernel Matching (cell identification)

Build an occupancy grid with dual evidence (positive for walls, negative for free space), then slide it across all 128 hypotheses (32 cells × 4 rotations). Match scores for the correct cell are 90-98%.
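The sliding match can be sketched as follows. The layout is an assumption: the maze is stored as a (2·rows+1)×(2·cols+1) grid with cell centres at odd indices and wall slots at even indices. Entries are +1 for wall, -1 for free, and 0 for unknown. The score is the evidence dot product normalized by the number of observed entries. `score`, `rotate_cw`, and `best_hypothesis` are hypothetical names. Under this normalization a perfect match scores 1.0, which is one plausible way to express the percentages above.

```rust
/// Evidence grid: +1 = wall, -1 = free, 0 = unknown (assumed encoding).
type Grid = Vec<Vec<i8>>;

/// Rotate a square kernel 90 degrees clockwise.
fn rotate_cw(k: &Grid) -> Grid {
    let n = k.len();
    let mut out = vec![vec![0i8; n]; n];
    for i in 0..n {
        for j in 0..n {
            out[j][n - 1 - i] = k[i][j];
        }
    }
    out
}

/// Agreement in [-1, 1] between an observation kernel (rotated `rot` quarter
/// turns) and the map centred on maze cell (row, col). Off-map counts as wall.
fn score(map: &Grid, kernel: &Grid, row: usize, col: usize, rot: usize) -> f64 {
    let mut k = kernel.clone();
    for _ in 0..rot {
        k = rotate_cw(&k);
    }
    let n = k.len() as isize;
    let half = n / 2;
    let (cr, cc) = (2 * row as isize + 1, 2 * col as isize + 1);
    let (mut dot, mut norm) = (0i32, 0i32);
    for i in 0..n {
        for j in 0..n {
            let kv = i32::from(k[i as usize][j as usize]);
            if kv == 0 {
                continue; // unknown: no evidence either way
            }
            let (r, c) = (cr + i - half, cc + j - half);
            let inside = r >= 0 && c >= 0 && (r as usize) < map.len() && (c as usize) < map[0].len();
            let mv = if inside { i32::from(map[r as usize][c as usize]) } else { 1 };
            dot += kv * mv; // +1 when evidence agrees, -1 when it conflicts
            norm += kv.abs();
        }
    }
    if norm == 0 { 0.0 } else { f64::from(dot) / f64::from(norm) }
}

/// Exhaustively score every (cell, rotation) hypothesis: (row, col, rot, score).
fn best_hypothesis(map: &Grid, kernel: &Grid) -> (usize, usize, usize, f64) {
    let (rows, cols) = ((map.len() - 1) / 2, (map[0].len() - 1) / 2);
    let mut best = (0, 0, 0, f64::NEG_INFINITY);
    for row in 0..rows {
        for col in 0..cols {
            for rot in 0..4 {
                let s = score(map, kernel, row, col, rot);
                if s > best.3 {
                    best = (row, col, rot, s);
                }
            }
        }
    }
    best
}
```

Because free space contributes negative evidence, an open cell where the map expects a wall lowers the score instead of being ignored.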

Performance: 56 ms localization processing per LIDAR scan, O(N) complexity

Sensor Fusion: 200 Hz IMU + 5.5 Hz LIDAR

Problem: LIDAR-only estimation has 180 ms latency and motion distortion (the robot can rotate 23° during a single scan)

Solution:

- Gyro integration at 200 Hz (measured drift of 1-2° over 5 min)

- Velocity-gated LIDAR heading corrections (applied only when |ω| < 0.1 rad/s)

- Linear Kalman filter for position with centripetal acceleration model

- 600 ms staleness timeout pauses navigation if LIDAR fails
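A sketch of the heading half of this scheme, assuming a simple complementary update. The gyro is integrated on every sample. A LIDAR heading nudges the estimate by a fixed fraction only when |ω| is below the 0.1 rad/s gate; the 0.2 gain is an assumed value. Every LIDAR scan resets a staleness clock, which is checked against the 600 ms timeout. The Kalman position filter is not shown, and `HeadingFilter` and its methods are illustrative names.

```rust
use std::f64::consts::PI;

/// Wrap an angle to [-PI, PI).
fn wrap(a: f64) -> f64 {
    (a + PI).rem_euclid(2.0 * PI) - PI
}

/// Gyro-integrated heading with gated LIDAR corrections and a staleness clock.
struct HeadingFilter {
    heading: f64,   // rad
    omega: f64,     // most recent gyro rate, rad/s
    gain: f64,      // fraction of the LIDAR error applied per correction (assumed)
    gate: f64,      // accept LIDAR heading only when |omega| is below this, rad/s
    lidar_age: f64, // seconds since the last LIDAR scan arrived
    timeout: f64,   // staleness limit, s
}

impl HeadingFilter {
    fn new(heading: f64) -> Self {
        Self { heading, omega: 0.0, gain: 0.2, gate: 0.1, lidar_age: 0.0, timeout: 0.6 }
    }

    /// 200 Hz gyro sample: integrate the rate over dt seconds.
    fn on_gyro(&mut self, omega: f64, dt: f64) {
        self.omega = omega;
        self.heading = wrap(self.heading + omega * dt);
        self.lidar_age += dt;
    }

    /// LIDAR heading estimate; returns true if the correction was applied.
    fn on_lidar(&mut self, measured: f64) -> bool {
        self.lidar_age = 0.0; // data arrived, even if we do not use it
        if self.omega.abs() >= self.gate {
            return false; // turning: scan is motion-distorted, skip the correction
        }
        self.heading = wrap(self.heading + self.gain * wrap(measured - self.heading));
        true
    }

    /// Mission control would pause navigation while this is true.
    fn is_stale(&self) -> bool {
        self.lidar_age > self.timeout
    }
}
```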

Architecture: OS threads for blocking I/O, Tokio watch channels for multi-rate fusion, async mission control at 50 Hz

Navigation: A* + Proportional Control

A* pathfinding on the 4×8 grid (<1 ms), waypoint filtering (a 20-cell path reduces to 4-5 turns), and proportional control at 50 Hz. Forward speed is gated by heading error (scaled by its cosine).
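A sketch of the planning half, under a few assumptions. Obstacles are modelled as blocked cells on the 4×8 grid; walls between cells would instead need a per-edge check. Moves are 4-connected, and the heuristic is Manhattan distance. `filter_waypoints` keeps only the start, the cells where the direction changes, and the goal, which is one way to reduce a long cell path to a handful of turns. All names here are hypothetical.

```rust
use std::cmp::Reverse;
use std::collections::{BinaryHeap, HashMap};

const ROWS: usize = 4;
const COLS: usize = 8;
type Cell = (usize, usize);

fn manhattan(a: Cell, b: Cell) -> usize {
    a.0.abs_diff(b.0) + a.1.abs_diff(b.1)
}

/// A* over free cells; returns the cell path from start to goal, if any.
fn astar(blocked: &[[bool; COLS]; ROWS], start: Cell, goal: Cell) -> Option<Vec<Cell>> {
    let mut open = BinaryHeap::new(); // min-heap on f = g + h via Reverse
    let mut g: HashMap<Cell, usize> = HashMap::new();
    let mut came_from: HashMap<Cell, Cell> = HashMap::new();
    g.insert(start, 0);
    open.push(Reverse((manhattan(start, goal), start)));
    while let Some(Reverse((_, cur))) = open.pop() {
        if cur == goal {
            let mut path = vec![cur];
            let mut c = cur;
            while let Some(&p) = came_from.get(&c) {
                path.push(p);
                c = p;
            }
            path.reverse();
            return Some(path);
        }
        let gc = g[&cur];
        let (r, c) = (cur.0 as isize, cur.1 as isize);
        for (dr, dc) in [(-1, 0), (1, 0), (0, -1), (0, 1)] {
            let (nr, nc) = (r + dr, c + dc);
            if nr < 0 || nc < 0 || nr >= ROWS as isize || nc >= COLS as isize {
                continue;
            }
            let next = (nr as usize, nc as usize);
            if blocked[next.0][next.1] {
                continue;
            }
            let tentative = gc + 1;
            if tentative < *g.get(&next).unwrap_or(&usize::MAX) {
                g.insert(next, tentative);
                came_from.insert(next, cur);
                open.push(Reverse((tentative + manhattan(next, goal), next)));
            }
        }
    }
    None
}

/// Keep start, direction changes (turns), and goal.
fn filter_waypoints(path: &[Cell]) -> Vec<Cell> {
    if path.len() <= 2 {
        return path.to_vec();
    }
    let dir = |a: Cell, b: Cell| (b.0 as isize - a.0 as isize, b.1 as isize - a.1 as isize);
    let mut out = vec![path[0]];
    for i in 1..path.len() - 1 {
        if dir(path[i - 1], path[i]) != dir(path[i], path[i + 1]) {
            out.push(path[i]);
        }
    }
    out.push(*path.last().unwrap());
    out
}
```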

Alternative approaches abandoned:

- Dynamic Window Approach: computationally complex, with collision-checking issues

- Pure Pursuit: unnecessary complexity for grid waypoints

P-only control proved sufficient given absolute LIDAR positioning.
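The control law can be sketched as a single function. It assumes the pose `(x, y, θ)` comes from the fused estimate. It also reads "scales by cosine" as `v = v_max · max(cos e, 0)`, so the rover stops translating once the heading error passes 90° and turns in place. The gain, limits, and the name `p_control` are illustrative.

```rust
use std::f64::consts::PI;

/// Wrap an angle to [-PI, PI).
fn wrap(a: f64) -> f64 {
    (a + PI).rem_euclid(2.0 * PI) - PI
}

/// Proportional heading control toward `target`; returns (v, omega).
/// pose = (x, y, theta) in metres and radians.
fn p_control(
    pose: (f64, f64, f64),
    target: (f64, f64),
    k_omega: f64,
    v_max: f64,
    omega_max: f64,
) -> (f64, f64) {
    let (x, y, theta) = pose;
    // Heading error to the waypoint, wrapped so the robot turns the short way.
    let err = wrap((target.1 - y).atan2(target.0 - x) - theta);
    let omega = (k_omega * err).clamp(-omega_max, omega_max);
    // Forward speed shrinks with cos(err) and is zero when facing away.
    let v = v_max * err.cos().max(0.0);
    (v, omega)
}
```

Called at 50 Hz with the current filtered waypoint as `target`, this drives straight when aligned and blends turning with forward motion otherwise.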

Box Detection: LIDAR Template Fitting

A spatial mask restricts points to the 2×2 ft loading zone, an L-shaped template (two adjacent faces) is fitted to the rectangular block, and a temporal filter (2-of-3 voting + EMA smoothing) stabilizes the estimate.

Performance: 2.5 ms processing, ~4 mm accuracy

Replaced: three VL53L0X ToF sensors (could not reliably detect the block)
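The temporal filter can be sketched independently of the template fit. Assumptions: each scan yields either a fitted block position `(x, y)` in metres or nothing. A detection counts as confirmed once at least 2 of the last 3 scans produced one, and the smoothing state is dropped whenever confirmation is lost. `BoxFilter` and the `alpha` value used below are hypothetical. The spatial mask and L-template fit are not shown.

```rust
use std::collections::VecDeque;

/// 2-of-3 vote plus exponential moving average on block detections (x, y).
struct BoxFilter {
    window: VecDeque<Option<(f64, f64)>>, // last 3 per-scan results
    ema: Option<(f64, f64)>,
    alpha: f64, // EMA weight on the newest detection
}

impl BoxFilter {
    fn new(alpha: f64) -> Self {
        Self { window: VecDeque::with_capacity(3), ema: None, alpha }
    }

    /// Feed one scan's detection (None if the fit failed). Returns the smoothed
    /// position only while at least 2 of the last 3 scans detected the block.
    fn update(&mut self, det: Option<(f64, f64)>) -> Option<(f64, f64)> {
        self.window.push_back(det);
        if self.window.len() > 3 {
            self.window.pop_front();
        }
        let hits = self.window.iter().filter(|d| d.is_some()).count();
        if hits < 2 {
            self.ema = None; // not confirmed: drop smoothing state
            return None;
        }
        if let Some((x, y)) = det {
            self.ema = Some(match self.ema {
                None => (x, y),
                Some((ex, ey)) => (ex + self.alpha * (x - ex), ey + self.alpha * (y - ey)),
            });
        }
        self.ema // a single missed scan still reports the last estimate
    }
}
```

Voting rejects one-off false fits, while the EMA damps scan-to-scan jitter in the confirmed position.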

Software Architecture

Layered design:

- Interface → Mission → Control → Hardware

- Trait-based abstraction: all sensors/actuators with real + simulated implementations

- Single mode switch (HardwareMode::Real | Simulation): enabled algorithm development on a laptop without hardware access
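A minimal sketch of the trait-plus-mode-switch pattern for one device follows. The trait name `Lidar`, the `Scan` type, the constant-range simulator, and the stubbed real driver are all assumptions for illustration. Only the `HardwareMode::Real | Simulation` switch comes from the text above. Code above the factory sees only `dyn Lidar`, so it runs unchanged in either mode.

```rust
/// One LIDAR return: (bearing in rad, range in m).
type Scan = Vec<(f64, f64)>;

/// Hardware-agnostic sensor interface (hypothetical name and signature).
trait Lidar {
    fn scan(&mut self) -> Result<Scan, String>;
}

#[derive(Clone, Copy, Debug, PartialEq, Eq)]
enum HardwareMode {
    Real,
    Simulation,
}

/// Simulated sensor: robot at the centre of a circular room (toy model).
struct SimLidar {
    radius: f64,
}

impl Lidar for SimLidar {
    fn scan(&mut self) -> Result<Scan, String> {
        Ok((0..360).map(|deg| (f64::from(deg).to_radians(), self.radius)).collect())
    }
}

/// Placeholder for the real serial driver, which is out of scope here.
struct RealLidar;

impl Lidar for RealLidar {
    fn scan(&mut self) -> Result<Scan, String> {
        Err("real RPLIDAR driver not included in this sketch".to_string())
    }
}

/// The single switch point: callers only ever hold a `Box<dyn Lidar>`.
fn make_lidar(mode: HardwareMode) -> Box<dyn Lidar> {
    match mode {
        HardwareMode::Real => Box::new(RealLidar),
        HardwareMode::Simulation => Box::new(SimLidar { radius: 0.5 }),
    }
}

/// Example consumer that is identical for real and simulated hardware.
fn nearest_obstacle(lidar: &mut dyn Lidar) -> Result<f64, String> {
    let scan = lidar.scan()?;
    Ok(scan.iter().map(|&(_, r)| r).fold(f64::INFINITY, f64::min))
}
```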

Frontend: React + Konva, WebSocket multiplexing (20 Hz pose, 5 Hz LIDAR, 2 Hz mission state)
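One way to multiplex the three streams over a single socket is sketched below, under stated assumptions. A broadcaster ticks at 20 Hz and sends each topic every `20 / rate` ticks. Every message carries a small JSON envelope with a `topic` field that the frontend can switch on. The topic names, envelope shape, and function names are hypothetical, and the socket itself is omitted.

```rust
/// Streams multiplexed over one WebSocket (names are hypothetical).
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
enum Topic {
    Pose,
    Lidar,
    Mission,
}

/// Base tick of the broadcaster loop, Hz.
const BASE_HZ: u32 = 20;

/// (topic, rate in Hz); each rate must divide BASE_HZ.
const RATES: [(Topic, u32); 3] = [(Topic::Pose, 20), (Topic::Lidar, 5), (Topic::Mission, 2)];

/// Topics to send on a given base tick.
fn topics_due(tick: u64) -> Vec<Topic> {
    RATES
        .iter()
        .filter(|&&(_, hz)| tick % u64::from(BASE_HZ / hz) == 0)
        .map(|&(t, _)| t)
        .collect()
}

/// Wrap an already-serialized JSON payload so the client can demultiplex it.
fn envelope(topic: Topic, payload_json: &str) -> String {
    let name = match topic {
        Topic::Pose => "pose",
        Topic::Lidar => "lidar",
        Topic::Mission => "mission",
    };
    format!("{{\"topic\":\"{}\",\"data\":{}}}", name, payload_json)
}
```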

Key Decisions

Simplicity over sophistication: Abandoned ESKF for complementary filtering + linear Kalman. Abandoned DWA for proportional control. Simple approaches met requirements with less complexity.

Single-sensor consolidation: Repositioned the LIDAR beneath the chassis so one sensor serves two purposes (localization + box detection). This eliminated the ToF sensors and gave better precision.

Hardware abstraction investment: Enabled parallel simulation and real-hardware development and rapid testing with recorded LIDAR data, eliminating deploy-to-hardware cycles.

Learnings

Measure before choosing algorithms: Measuring gyro drift (1-2° over 5 min) validated the simple fusion approach. ToF testing revealed detection issues early and enabled a fast pivot to the LIDAR-based approach.

Fast abandonment: DWA was abandoned within one day and ToF after initial testing, freeing time for the alternatives that succeeded.

Value of a dedicated test environment: Building a full-scale maze at home enabled frequent iteration during integration.