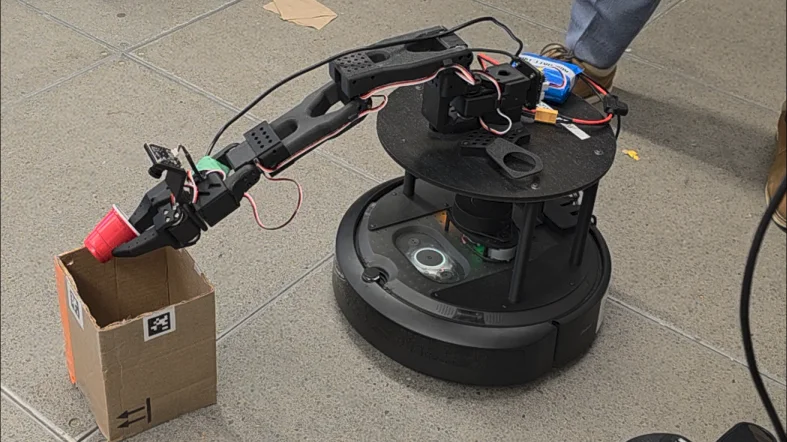

MIE443 Autonomous Pick-and-Place Robot

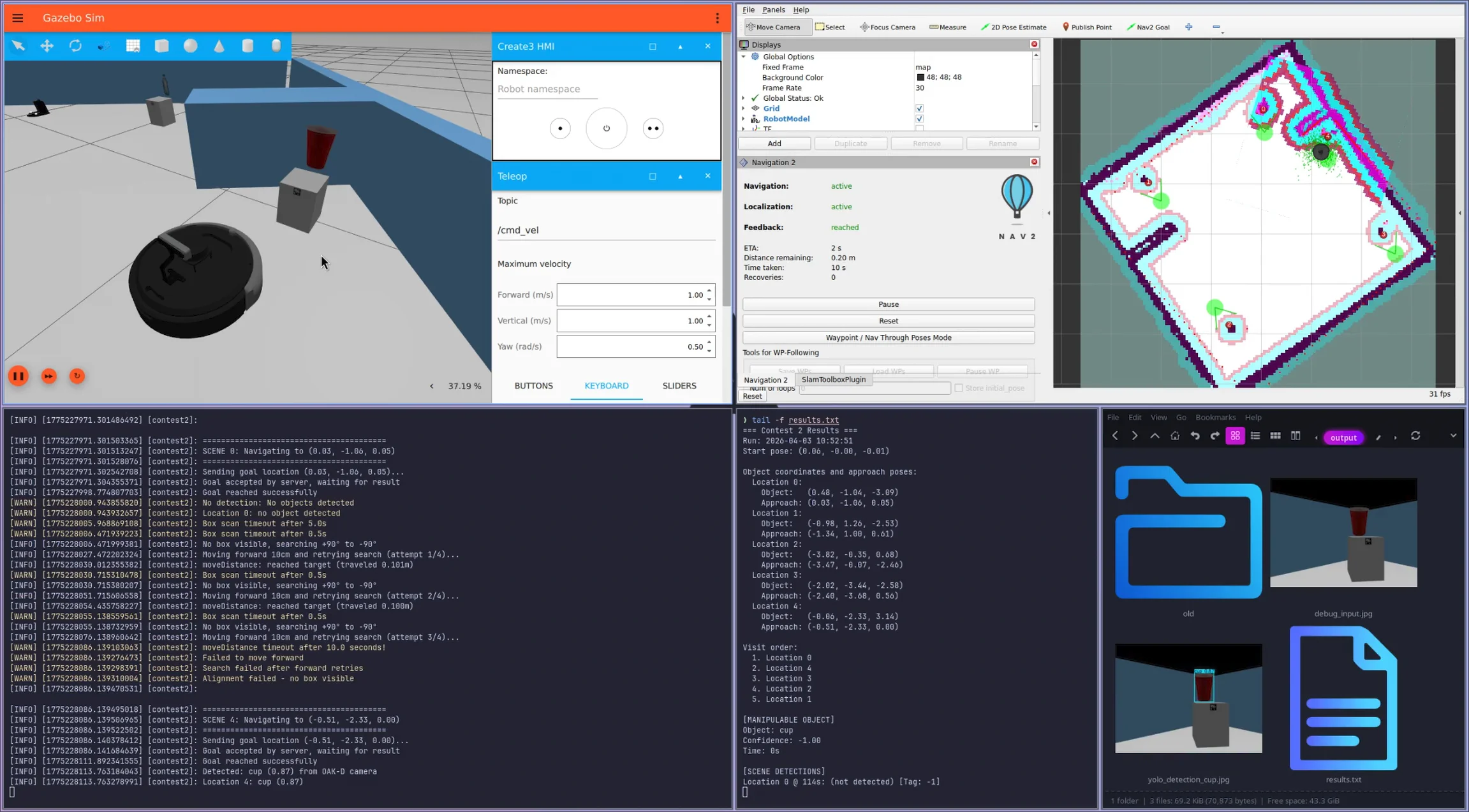

Designed and implemented a fully autonomous mobile manipulation system that locates, classifies, and places household objects into correct bins within a 5-minute time limit. The system integrates perception (YOLO, AprilTags), navigation (Nav2, AMCL), and manipulation (MoveIt2) in a hybrid deliberative/reactive control architecture.

Technical Challenge

The robot must:

- Pick up an unknown object from its base plate

- Navigate to 5 scene locations in an optimal order

- Identify objects at each scene using vision

- Match the carried object to the correct bin

- Perform precise placement using a 5-DOF robotic arm

- Complete the entire run autonomously, with no human intervention

Key Implementation

Route Optimization: Brute-force TSP solver that uses actual Nav2 path costs (not Euclidean distance) to minimize traversal time. It queries the planner for all pairwise path costs, then evaluates all 5! = 120 permutations of the five scene locations to find the optimal visit order.
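
A minimal sketch of the brute-force ordering, assuming the pairwise planner costs have already been collected into a matrix with the robot's start pose at index 0. The function name `best_visit_order` and the open-path assumption (no return to the start) are illustrative and not taken from the project code.

```python
from itertools import permutations


def best_visit_order(cost, start=0):
    """Return (order, total_cost) for the cheapest open path from `start`.

    cost[i][j] is the planner-reported path cost from node i to node j
    (assumed to be filled in beforehand). Node `start` is the robot's
    starting pose; every other index is a scene location.
    """
    targets = [i for i in range(len(cost)) if i != start]
    best_order, best_cost = None, float("inf")
    for order in permutations(targets):
        total = 0.0
        prev = start
        for node in order:
            total += cost[prev][node]
            prev = node
        if total < best_cost:
            best_order, best_cost = order, total
    return list(best_order), best_cost
```

With five scene locations the loop checks only 120 orderings, so exhaustive search is cheap and no heuristic is needed.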

Visual Servoing: Proportional controller for fine alignment to AprilTag-marked bins. Uses TF2 transforms to compute the box center from the tag's normal, achieving ±1 cm positioning accuracy.
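
The alignment step could look roughly like the sketch below. It assumes the tag pose has already been transformed into the robot base frame (x forward, y left), for example via a TF2 lookup, and works in 2D. The gains, velocity limits, tolerances, and function names are hypothetical values chosen for illustration.

```python
import math


def clamp(value, limit):
    """Limit value to the range [-limit, limit]."""
    return max(-limit, min(limit, value))


def box_center_from_tag(tag_xy, normal_xy, half_depth):
    """Estimate the box center by stepping back from the tag face.

    normal_xy points out of the tag face (toward the robot), so the
    center lies half_depth metres in the opposite direction.
    """
    nx, ny = normal_xy
    length = math.hypot(nx, ny)
    return (tag_xy[0] - half_depth * nx / length,
            tag_xy[1] - half_depth * ny / length)


def alignment_command(target_xy, standoff, k_lin=0.5, k_ang=1.5,
                      v_max=0.10, w_max=0.5, pos_tol=0.01, ang_tol=0.02):
    """Proportional command (v, w, done) toward a standoff from the target."""
    x, y = target_xy
    distance_error = math.hypot(x, y) - standoff
    bearing_error = math.atan2(y, x)
    if abs(distance_error) < pos_tol and abs(bearing_error) < ang_tol:
        return 0.0, 0.0, True
    v = clamp(k_lin * distance_error, v_max)
    w = clamp(k_ang * bearing_error, w_max)
    return v, w, False
```

Calling `alignment_command` once per control cycle with a fresh tag estimate gives a simple closed loop; the 1 cm position tolerance here mirrors the accuracy figure quoted above.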

Recovery Behaviors: Rotational search patterns plus incremental forward movement when targets are not visible. Bumper sensors are integrated with the Nav2 costmaps for collision avoidance during blind movements.
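
One way to structure the rotate-then-advance search is sketched below. The sweep angles, step size, cycle count, and the `target_visible` and `execute` callbacks are all hypothetical stand-ins for the project's own detection check and motion commands.

```python
def recovery_steps(sweep_deg=(30, -60, 30), forward_m=0.10, max_cycles=3):
    """Yield ("rotate", degrees) and ("forward", metres) steps.

    Each cycle sweeps left, right, and back to center, then creeps
    forward by a small increment before sweeping again.
    """
    for _ in range(max_cycles):
        for angle in sweep_deg:
            yield ("rotate", angle)
        yield ("forward", forward_m)


def search_for_target(target_visible, execute, steps):
    """Run recovery steps until the target is seen; return True on success."""
    if target_visible():
        return True
    for step in steps:
        execute(step)
        if target_visible():
            return True
    return False
```

Checking visibility after every step lets the search stop as soon as the target appears rather than finishing the whole sweep.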

Distributed YOLO: Custom service architecture runs YOLOv8n inference on a laptop while image capture happens on the robot (Raspberry Pi 4), with compressed images sent over the network. Selecting the largest-area detection keeps background objects from being mistaken for the target.
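
Largest-area selection can be sketched as follows, assuming each detection arrives as a label, a confidence, and a pixel bounding box. The `Detection` type and the confidence cutoff are illustrative assumptions, not the project's actual message definition.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass
class Detection:
    label: str
    confidence: float
    x1: float
    y1: float
    x2: float
    y2: float

    @property
    def area(self) -> float:
        """Bounding-box area in square pixels (zero for degenerate boxes)."""
        return max(0.0, self.x2 - self.x1) * max(0.0, self.y2 - self.y1)


def pick_largest(detections: Sequence[Detection],
                 min_confidence: float = 0.5) -> Optional[Detection]:
    """Return the confident detection with the largest box, or None."""
    candidates = [d for d in detections if d.confidence >= min_confidence]
    return max(candidates, key=lambda d: d.area, default=None)
```

The idea is that the object of interest sits closest to the camera and therefore fills the most pixels, while clutter in the background produces smaller boxes.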

Simulation Infrastructure: Built a custom Gazebo world from scratch: converted the contest PGM maps to SDF, created textured billboard objects, and added AprilTag models. This enabled full control loop validation without access to the physical hardware.
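
The map conversion might be approached as in the sketch below, which is an assumption about the general technique rather than the project's actual tool. It takes the PGM pixels as an already-loaded 2D list, treats pixels darker than a threshold as walls, merges each horizontal run into one box, and assumes the map origin is the lower-left corner of the image. All names and default values are hypothetical.

```python
def occupied_runs(grid, threshold=100):
    """Yield (row, col_start, length) for each horizontal run of dark pixels."""
    for r, row in enumerate(grid):
        start = None
        # A trailing sentinel at the threshold closes a run that reaches the edge.
        for c, value in enumerate(list(row) + [threshold]):
            if value < threshold and start is None:
                start = c
            elif value >= threshold and start is not None:
                yield (r, start, c - start)
                start = None


def runs_to_sdf(grid, resolution, origin=(0.0, 0.0), wall_height=0.5,
                threshold=100):
    """Build an SDF string with one static box link per occupied run."""
    rows = len(grid)
    links = []
    for i, (r, c0, n) in enumerate(occupied_runs(grid, threshold)):
        # Image row 0 is the top of the map, so flip the y axis.
        x = origin[0] + (c0 + n / 2.0) * resolution
        y = origin[1] + (rows - r - 0.5) * resolution
        geom = (f"<geometry><box><size>{n * resolution:.3f} "
                f"{resolution:.3f} {wall_height:.3f}</size></box></geometry>")
        links.append(
            f'<link name="wall_{i}">'
            f"<pose>{x:.3f} {y:.3f} {wall_height / 2:.3f} 0 0 0</pose>"
            f'<collision name="collision">{geom}</collision>'
            f'<visual name="visual">{geom}</visual></link>'
        )
    return ('<sdf version="1.7"><model name="map_walls">'
            "<static>true</static>" + "".join(links) + "</model></sdf>")
```

Merging runs instead of emitting one box per pixel keeps the model small enough to load quickly, at the cost of not merging vertically adjacent walls.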

Tech Stack

- Languages: C++17, Python 3

- Frameworks: ROS 2 Jazzy, Nav2, MoveIt2

- Perception: YOLOv8n, AprilTag detection, OAK-D stereo camera

- Hardware: TurtleBot 4 Lite (Create3 base, RPLIDAR), SO-ARM101 arm

- Tools: Gazebo, RViz, TF2, Docker

Results

Completed the full autonomous sequence in simulation. The custom modules (alignment, arm operations, motion control, bumper processing) integrate with Nav2 and MoveIt2, and the system handles detection failures through multi-stage recovery.